The impact of AI on intellectual property in content creation

Published June 13, 2024

AI Summary

Key takeaways

- AI tools like ChatGPT raise intellectual property and ethical issues by learning from online data

- Rapid AI development is prompting a re-evaluation of current legislation

- Transparency when using AI in content creation is crucial

AI language models like ChatGPT are revolutionizing content creation, but they also bring a range of complexities and challenges, especially when it comes to intellectual property.

» Find out how Entail's SEO strategies help create high-quality content

How ChatGPT creates content

ChatGPT is a large language model trained on vast amounts of text, much of it gathered from the internet. Rather than indexing and ranking pages the way a search engine does, it learns statistical patterns in that text and uses them to generate fluent, often nuanced responses.

It doesn't usually copy information directly. Instead, it blends what it has learned from various, sometimes conflicting, sources to form new responses of its own.
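To make the idea of "learning patterns and generating new text" more concrete, here is a deliberately tiny sketch. It is not how ChatGPT is built: real models use neural networks trained on enormous datasets, while this toy only counts which word tends to follow which. The function names (`train_bigram_model`, `generate`) and the two-sentence corpus are made up for illustration.

```python
import random
from collections import defaultdict


def train_bigram_model(corpus):
    """Record, for every word, the words that follow it in the training text."""
    followers = defaultdict(list)
    for sentence in corpus:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            followers[current].append(nxt)
    return followers


def generate(followers, start, max_words=8, seed=0):
    """Build a sentence by repeatedly picking a word seen after the previous one."""
    rng = random.Random(seed)  # fixed seed so the toy is repeatable
    words = [start]
    # Stop at the length limit or when the last word was never followed by anything.
    while len(words) < max_words and followers.get(words[-1]):
        words.append(rng.choice(followers[words[-1]]))
    return " ".join(words)


# Hypothetical training text standing in for "the internet".
corpus = [
    "creators hold the rights to their work",
    "models learn patterns from the work of creators",
]

model = train_bigram_model(corpus)
print(generate(model, "creators"))
```

Even at this scale, the output can be a sentence that appears in neither source, yet every word pair in it was learned from someone's writing. That is the tension the rest of this article is about.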

» Can we really trust AI-generated answers?

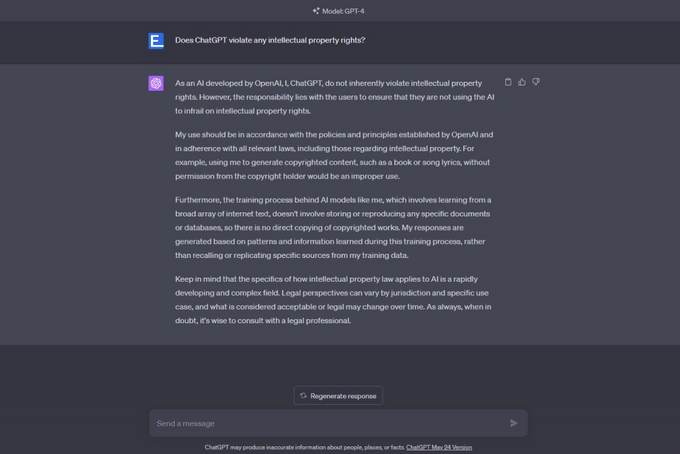

Does AI violate intellectual property rights?

Traditionally, creators hold the rights to their work. Others can't just copy it. However, summarizing or drawing conclusions from various sources is a common practice, which is now done by AI as well. With the rise in social media content, there's more for AI to gather information from.

AI essentially summarizes its sources. When asked, it describes its own process as if it were self-aware: it claims to read vast amounts of online information and draw its own conclusions, and it asserts that the content it creates is original and not copied from anywhere else.

While this may sound like human learning, it's fundamentally different. AI may not violate intellectual property rights in the way a human copying someone's work would, but because it is a non-human system that learns from everyone else's work, it may still infringe on those rights.

AI changes the rules of the game. And if the game has changed, then the previous rules cannot apply. We need new rules.

» Discover the types of content machines can't master.

Legislative and ethical considerations

We're dealing with a whole new reality here. The laws we have were made for a certain time and place, and now things have changed. Laws should protect people, help us be creative, and make sure we can earn a living.

AI can do a lot of impressive things, but if it stops people from being creative, we need laws to fix that.

Even if we say a company owns the AI, we still need to protect people and their ideas. Companies should pay the people whose work they use to train AI or to generate its answers.

Ethics also play a big role. Yuval Noah Harari has a good point: if people use AI online, they should say so. AI can do a lot of human-like things, but we shouldn't let it pretend to be a human. If you write a post with ChatGPT, you should tell people that you used AI.

Think about food labels. They list the ingredients, so we know what we're eating. It should be the same with content. We should know how it was made and who—or what—made it.

Knowing who wrote a piece of content helps us trust it, and we should know if AI was involved.
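As a rough illustration of the food-label idea, a content label could be as simple as a small record attached to each post. This is only a sketch under our own assumptions: the `ContentLabel` class, its fields, and the wording of the disclosure are hypothetical and don't follow any existing standard.

```python
from dataclasses import dataclass, field


@dataclass
class ContentLabel:
    """Hypothetical 'ingredients list' for a piece of content."""
    title: str
    human_authors: list[str] = field(default_factory=list)
    ai_tools: list[str] = field(default_factory=list)

    def disclosure(self) -> str:
        """Return a one-line statement of who, or what, made the content."""
        authors = ", ".join(self.human_authors) or "no named human author"
        if self.ai_tools:
            tools = ", ".join(self.ai_tools)
            return f"Written by {authors}, with help from AI ({tools})."
        return f"Written by {authors}. No AI tools were used."


# Example: a post drafted by a (made-up) author with ChatGPT's help.
label = ContentLabel("My post", human_authors=["Dana"], ai_tools=["ChatGPT"])
print(label.disclosure())
```

The point is not the format but the habit: one visible line that tells readers whether AI was involved.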

» Learn whether AI will trump topical authority.

Looking forward

So what's next for AI and the content it creates? Honestly, it's hard to say. Everyone has different ideas about it, even the big names in AI. Take Sam Altman, the CEO of OpenAI, for example. He says we need rules for AI, but some people think he just wants to keep smaller companies out of the game.

Right now, AI is like the Wild West. No one's quite sure what's going to happen, but governments are starting to step in. We'll almost certainly get rules for how we can use AI to create content, but it'll probably take a few years to see how it all works out. In the meantime, AI's just going to keep getting better and better.